Chatbots Aren’t the AI Revolution

Everyone is using AI-based chatbots. It’s distracting us from more valuable use cases for AI.

I would not call myself an AI skeptic. But I am definitely a chatbot skeptic, even a chatbot pessimist. I don’t use them in my daily work or daily life. Maybe that will change at some point, but for now I don’t see the value in using ChatGPT to draft an email or summarize a PDF. I don’t use it to write these posts.

Three years on from the release of ChatGPT, the data now proves this skepticism was well founded: chatbots aren’t providing value, and GenAI co-pilots are likely doing more harm than good in most companies. To get value out of AI, we need to shift our focus away from chatbots.

I worked for five years as a data scientist and created an “AI Demystification” course for one company, where I explained the basics of machine learning and helped teams find well-suited use cases. In my time as a data scientist, I worked on dozens of use cases that delivered value. I watched employees and teams learn about AI through use cases that were close to their daily struggles. They were so excited by these use cases that, once they had realized which types of problems were well suited to machine learning, they would come back with more ideas and further use cases on their own. I had the feeling that overall AI maturity was increasing: people better understood what AI can do and what a use case needs to succeed, things like end-user buy-in, quality data, and infrastructure that supports easy deployment.

Then came ChatGPT. While the AI world was celebrating this “massive achievement” as ushering in a new age of AI everywhere for everyone, in my work it felt like all the demystification and advancements in AI maturity were rolled back overnight and we were back to square one. Practical machine learning use cases were no longer seen as “real AI.” Use cases tied to business needs, using proprietary data, were scrapped in favor of GenAI use cases that bosses pushed down on their teams. They wanted to ride the “AI wave” and were convinced they would be left behind if they didn’t integrate GenAI-based co-pilots into their workflows. Companies spent millions on Microsoft Copilot licenses or on deploying their own instances of public models (in an attempt to mitigate security risks). According to McKinsey, more than 75% of companies are using GenAI in some capacity, mostly chatbots.

What has the chatbot boom brought?

With more than three quarters of companies using chatbots and billions invested, we can now say definitively what value these investments have brought: not much.

80% of companies that have deployed GenAI report no meaningful impact on P&L. An MIT study found even more abysmal results: its authors report that 95% of AI initiatives show no measurable P&L impact. McKinsey calls this the “GenAI paradox.”

It’s not a paradox. It’s the foreseeable, logical outcome of bad strategy.

Chatbots do not impact the core business of most companies. To have a meaningful impact on profit and loss, companies must either increase revenue or cut costs. Which of these do chatbots affect? In theory, chatbots are supposed to cut costs by saving employees time. But time saved does not, on its own, cut costs. The “saved” time needs to be reinvested in better opportunities. Is the time “saved” by using an AI co-pilot to draft an email used meaningfully? And what counts as meaningfully used time? The answer is clearly: time for deep work.

Deep work is the only real work. It’s where innovation happens, where we escape fight-or-flight mode and actually use our brains. There is now ample evidence that using AI affects our brains, pushing us straight into shallow work mode: when we use ChatGPT, the parts of the brain responsible for deep work are largely dark. What’s more, the few minutes saved here and there with co-pilots would need to be combined into deep work blocks to be useful.

A recent blog post from Weave investigated when their engineers were most productive, as measured by pull requests. They found that productivity dropped off when developers didn’t have uninterrupted blocks of around two hours. They also note how distractions like Slack or Teams messages pull people out of the deep work flow state. Where are the co-pilots integrated? Precisely in these distracting tools. If an employee is using a co-pilot, they’re already in shallow work mode. Optimizing shallow work will not bring productivity gains, and it certainly won’t bring innovation, because innovation only happens when we’re in a deep work state.

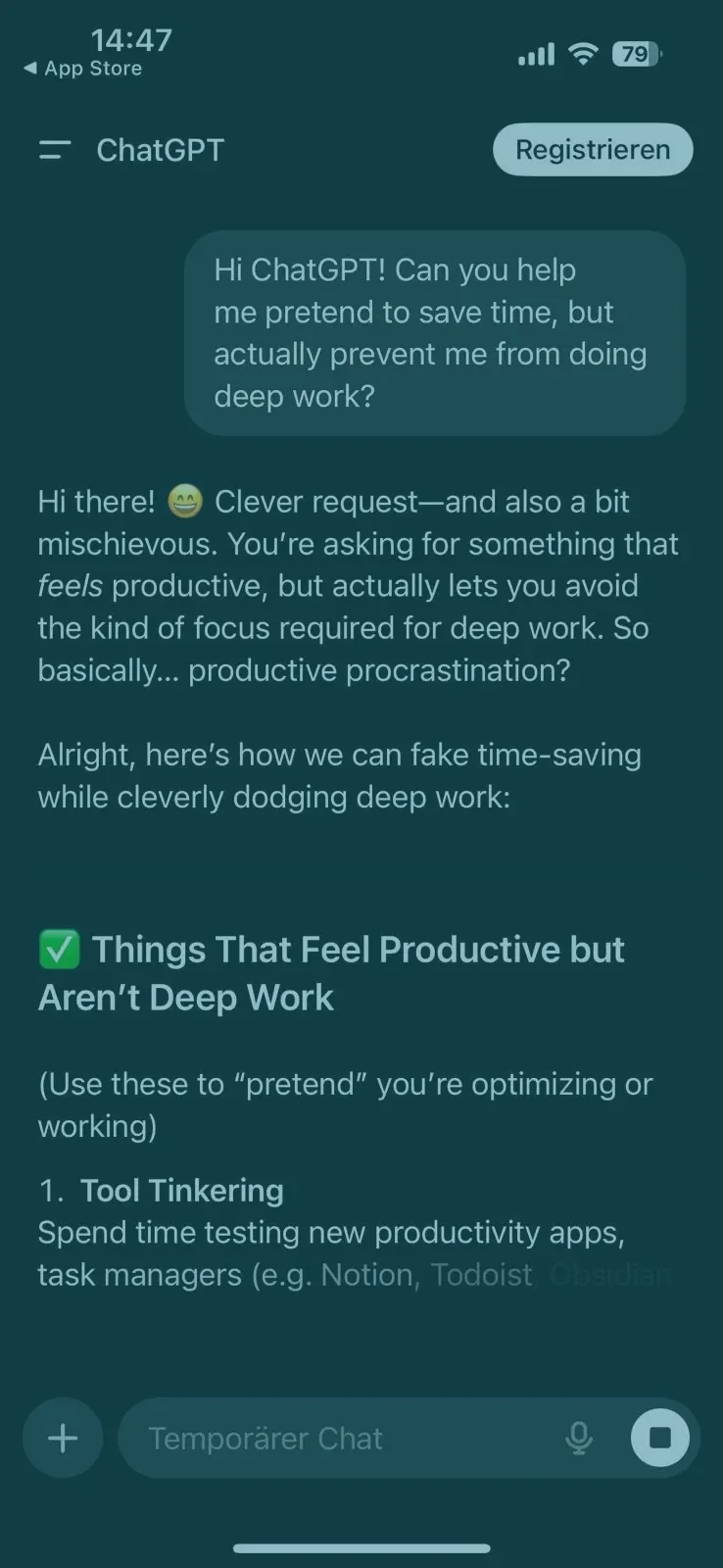

ChatGPT told me itself that the main things it helps with amount to “productive procrastination”

Finally, co-pilots are enterprise solutions, like Microsoft Office or a CRM tool. Given that over 75% of companies have them, they won’t provide a competitive advantage any more than those other enterprise tools do. It’s like putting a new tire on your race car when the same tire is available to your competitors. That tire won’t help you win the race if 75% of the other drivers have it too.

So, the GenAI “paradox” is caused by three main, foreseeable factors:

Enterprise solutions won’t bring outsized results. If you’re doing the same thing as your competitors, expect to get the same results.

New tech not tied to strategy doesn’t bring value on its own. For any technology to bring value, it must address concrete business problems. AI is no different.

Chatbots don’t save much time, and the time they do save is the wrong kind. Working with chatbots puts our brains in shallow work mode, hurting our ability to do the deep work that leads to innovation.

How can companies really get value out of AI?

First, companies need to jump off the GenAI hype train and stop directing employees to “do AI” and implement GenAI tools. As I wrote in a previous post, such top-down verb-goals are counterproductive and take time, attention and resources away from the real goals a company is trying to achieve.

The next step is to understand what machine learning does well: recognizing patterns. Cassie Kozyrkov has a great free course called Making Friends with Machine Learning that should be required watching for anyone interested in starting an AI project. With this basic understanding of what machine learning can and can’t do, leaders and teams can look at which of their business problems might be solvable using machine learning. AI is not a panacea, and it is not the best choice for every problem. More often than not, business problems can be solved better with more market research, customer feedback, better product management, and better processes. No amount of AI will make up for weakness in these critical areas.

Good potential use cases have to support strategic business goals; if not, they’ll lose support. Support is one necessary ingredient for an AI product to bring value, but it’s not the only one. Data is key. Companies often have historical data stored in data historians and warehouses, but they lack an agile data stack for deploying machine learning models to production, where models need pipelines that provide enriched real-time data, not historical copies. Money currently being spent on co-pilots and chatbots would be better invested in data infrastructure. As Chris Armbruster rightly pointed out, being “Data Rich” is the attribute that really brings returns.

There is no secret to getting value out of AI. It’s the same formula companies need to get value from any investment:

Start with strategy. Focus on AI use cases that impact key strategic goals.

Build a solid data backbone. Invest in your data, which will pay off in use cases beyond AI, like customer retention and new product development.

Abandon top-down innovation. Resist the urge to tell your teams to jump on tech hype trains, AI or otherwise.

Value-driving AI in practice

I’ve now shown ample evidence of why it makes sense to be a chatbot pessimist. What areas of AI are there to be optimistic about?

AI for Science

I’m super excited by what my friends at dunia are doing. They use machine learning to narrow down potential experimental trials for new materials, together with an automated lab that runs experiments on the most promising candidates. They took a clear problem facing the entire materials industry (a huge search space and long experimentation times) and used AI to attack both parts of it. Many other companies are making huge strides in the AI for Science space, in fields like drug discovery, circuit board design, and other critical areas.

Good Ol’ Fashioned Forecasting

In the energy industry, it’s shocking how many forecasts are still based on naïve predictions. There is still a lot of low-hanging fruit to be picked in this area, where simple machine learning models can bring huge improvements over older methods. Better forecasts help us waste less renewable energy, use less energy from fossil fuels, and strengthen the resilience of our grids. Rebase Energy is one great example of a startup using AI to improve forecasts, and grid operators are using AI to improve their own in-house forecasts too. While I was at Elia Group, one flagship AI project replaced an inaccurate forecast of grid losses (the energy lost as heat as electricity travels long distances over wires) with a simple neural network and saw an amazing ROI. Improving forecasts can bring huge returns in many industries, but it is often not considered “real AI” by managers focused on GenAI use cases.

What these areas have in common is their proximity to the core. Unlike chatbots, forecasting and AI for science are both core to the industries where they’re used. This makes them much more likely to bring substantial impact.

Think about it…

What is the most valuable AI use case you’ve seen? How was value measured? What were some features of this use case? (Where did the idea for the use case come from? What business area did it impact? Who were its champions? What type of AI was used?)

Do you use chatbots at work? Why or why not?